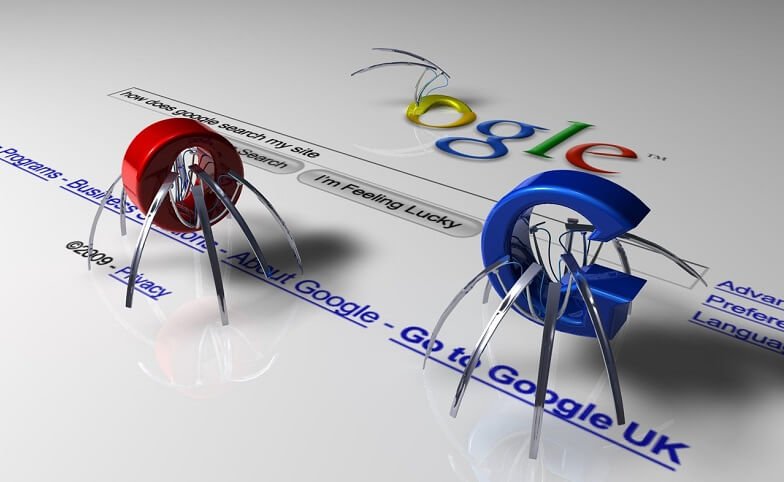

Yes, technology is so advanced that even Google can read your website. That's right: somehow, Google can actually read the text on your website.

Well, not literally. It can't understand the context of your content the way a human reader does, but it can identify words that are related to each other, and that is how it determines how your website should be indexed.

The program that does this is known as the search engine bot, crawler, or spider. Its job is to crawl websites to see how their pages interlink, find keywords that indicate what each page is about, and gauge how large the site is. Essentially, the bot is what tells Google how to index a website. When everything on your website is in order, the bot easily crawls the site, finds repeated and relevant keywords, and then indexes each page for the content it finds. The problem is that when your website isn't optimized correctly, it can become difficult for the search engines to crawl it effectively.
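Real search engines use far more sophisticated (and unpublished) signals, but the basic idea of spotting repeated keywords can be illustrated with a toy word counter. The Python sketch below counts the most frequent words on a page after dropping a small, hypothetical list of stop words; `top_keywords` and `STOP_WORDS` are illustrative names, not part of any Google tool.

```python
import re
from collections import Counter

# A tiny, hypothetical stop-word list; real systems use far larger ones.
STOP_WORDS = {"a", "an", "and", "the", "of", "to", "in", "is", "for", "on", "your", "with"}


def top_keywords(page_text: str, n: int = 5) -> list[tuple[str, int]]:
    """Return the n most frequent non-stop-words in page_text, with their counts."""
    words = re.findall(r"[a-z0-9']+", page_text.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS)
    return counts.most_common(n)


if __name__ == "__main__":
    text = ("Fresh coffee beans. Our coffee roastery ships coffee beans "
            "and brewing gear to coffee lovers.")
    print(top_keywords(text, 3))
```

For the sample sentence, `coffee` comes out on top with four mentions, followed by `beans` with two.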

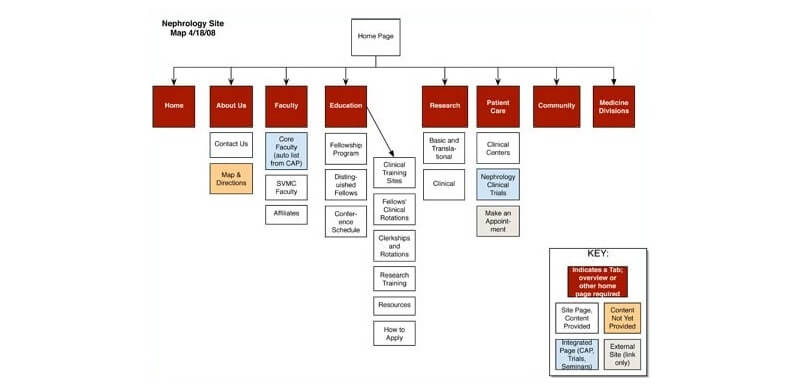

Why Is This Important?

Well, when Google or other search engines crawl a website effectively, they can map out all of its pages and index them more accurately. A site with proper navigation helps the bots crawl deeply and index every single page, which becomes especially important as your website grows. Sometimes this happens naturally, but you can also take steps to speed up how search engines crawl your website so that every page gets indexed correctly.

So how do you help your website along and get the search engines to navigate through it effectively? Use these 10 tips to help Googlebot crawl and index your site more efficiently.

1. Update Regularly

Google has made a point of rewarding sites that have fresh, updated content. In fact, content is by far the most important criterion for Google. A site that updates its content on a regular basis is likely to be crawled more frequently, and therefore indexed better. To help things along, provide fresh content in the form of a blog on your website. That is a lot easier than constantly adding new web pages or changing your website data every couple of weeks.

A blog is certainly the easiest and most affordable way to produce new content on a regular basis, and that content can take the form of videos, images, infographics, and written articles. If your website is very static, consider at least adding a Twitter search widget or a Twitter profile status widget, so that part of your site is constantly updating with new content.

2. Choose a Server with Good Uptime

Choose a hosting server with good uptime, meaning your site is continually reachable by your audience. No one wants to surf the web and come across a website that doesn't work, so choose a hosting service with 99% or higher uptime. This is very important because Googlebot notices web pages that frequently drop off the Internet; when this happens too often, the bot lowers its crawl rate and doesn't crawl the entire site, and it also takes longer to pick up new content. Many hosting services offer 99%+ uptime; you just need to shop around and read reviews.
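It is worth doing the arithmetic on an uptime guarantee, because the downtime it still allows adds up. This small Python sketch converts an uptime percentage into allowed downtime over a 30-day month; the 30-day month is an assumption chosen for round numbers, and `allowed_downtime_hours` is a hypothetical helper.

```python
# Assumption: a 30-day month, to keep the numbers round.
HOURS_PER_30_DAY_MONTH = 30 * 24


def allowed_downtime_hours(uptime_percent: float,
                           period_hours: float = HOURS_PER_30_DAY_MONTH) -> float:
    """Hours of downtime still permitted over period_hours at the given uptime."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return period_hours * (100 - uptime_percent) / 100


for uptime in (99.0, 99.9, 99.99):
    print(f"{uptime}% uptime -> {allowed_downtime_hours(uptime):.2f} h of downtime per 30 days")
```

At 99% uptime a host can still be down about 7.2 hours in a 30-day month, while 99.9% allows roughly 43 minutes.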

3. Add a Sitemap

Submitting a sitemap is one of the first things you should do to make your website discoverable by Googlebot, yet many people don't know what a sitemap is or how to add one to their site. WordPress users can install the free Google XML Sitemaps plugin; alternatively, you can create your own sitemap, add it to your website files, and then submit it to Google.
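If you build the sitemap yourself, the format is defined by the sitemaps.org protocol: a `<urlset>` element containing one `<url>` entry per page, each with a `<loc>` and an optional `<lastmod>` date. Here is a minimal Python sketch that produces such a file with the standard library; the URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper rather than part of any plugin.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (absolute URL, last-modified YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = loc          # special characters are escaped for us
        ET.SubElement(url_el, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Placeholder pages; replace with your own URLs and modification dates.
    pages = [
        ("https://www.example.com/", "2024-01-15"),
        ("https://www.example.com/blog/first-post", "2024-01-10"),
    ]
    print(build_sitemap(pages))
```

Save the output as `sitemap.xml` in your site's root directory and submit its URL in Google Search Console.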

4. Avoid Placing Copied Content on Your Website

When you copy content from another website, you may decrease the rate at which Googlebot crawls your site. This can result in less of your site being crawled and, in some cases, can even lead Google to penalize your site and keep it off the first page of results.

To prevent this from happening, you always want to offer fresh and relevant content that is not copied from any other website.

What kind of content am I talking about?

On the web, content takes many forms: yes, it can be a written article or blog post, but it can also be an image, an infographic, or a video.
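Google has not published exactly how it detects copied content, but a common textbook way to measure how much two texts overlap is to split each into overlapping word sequences ("shingles") and compute their Jaccard similarity. The Python sketch below shows that generic technique, not Google's method; you could use it to check whether a draft leans too heavily on a source. The function names and the three-word shingle size are illustrative choices.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard_similarity(a: str, b: str, k: int = 3) -> float:
    """Shared shingles divided by all distinct shingles (0.0 = no overlap, 1.0 = identical)."""
    sa, sb = shingles(a, k), shingles(b, k)
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0
```

A score near 1.0 means the two texts are nearly identical; where you draw the line is a judgment call.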

5. Reduce the Website Load Time

The faster a website loads, the better it tends to do in the search engines, and the faster the search engine bots can crawl it.
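A quick way to keep an eye on load time is to time how long your server takes to return a page. This Python sketch uses only the standard library; `measure_load_time` is a hypothetical helper, and it measures just the HTML download, not the images, scripts, and rendering a browser also waits for. For a fuller picture, Google's PageSpeed Insights tool reports on the whole page.

```python
import time
import urllib.request


def measure_load_time(url: str, timeout: float = 10.0) -> tuple[int, int, float]:
    """Fetch url once; return (HTTP status, response size in bytes, seconds taken)."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        body = response.read()          # download the full HTML body
        status = response.status
    elapsed = time.perf_counter() - start
    return status, len(body), elapsed
```

Calling `measure_load_time("https://www.example.com/")` with your own URL returns the status code, the byte count, and the elapsed seconds; averaging several runs gives a more reliable figure than a single request.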

6. Block Access to Unwanted Pages

Sometimes your website has pages that you don't want the general public to find, such as admin pages, membership pages, certain files, and other private areas. You want to keep the bots from crawling these, because there is no benefit in having them indexed. To save crawl time, edit the robots.txt file in your site's root directory to stop the bots from crawling these parts of your website.
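A robots.txt file is plain text served from the root of your domain (for example `https://www.example.com/robots.txt`). The sketch below holds a hypothetical file that blocks `/wp-admin/` and `/members/` and points bots to a sitemap, then uses Python's standard `urllib.robotparser` module to confirm which paths a crawler identifying as Googlebot may fetch. The paths and domain are placeholders for your own.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the admin and members areas for every bot.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /members/

Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ("/blog/first-post", "/wp-admin/options.php", "/members/profile"):
    allowed = parser.can_fetch("Googlebot", "https://www.example.com" + path)
    print(f"{path}: {'crawl' if allowed else 'blocked'}")
```

Keep in mind that robots.txt only asks bots not to crawl a path; a blocked page can still appear in results if other sites link to it, so use a `noindex` directive or password protection for pages that must stay out of the index.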

7. Monitor and Optimize the Search Engine Crawling of Your Website

You can monitor and optimize how Google crawls your site with a Google tool called Webmaster Tools (since renamed Google Search Console). It gives you specific crawl statistics to analyze, which lets you make changes to your website that improve how it is crawled. You can also manually control the Google crawl rate to make it faster or slower on your website. The tool has a setting for this, although I suggest you use it with caution and only when the bot seems to be causing problems.
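Besides the crawl statistics in Search Console, your own server logs show when Googlebot visits. This Python sketch counts requests per day whose user agent mentions Googlebot; it assumes the "combined" log format commonly used by Apache and nginx, and `googlebot_hits_per_day` is a hypothetical helper. Because any client can claim to be Googlebot, Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup before drawing firm conclusions.

```python
import re
from collections import Counter

# Captures the day from the [timestamp] field and the last quoted field
# (the user agent) of a line in the "combined" access-log format.
LOG_LINE = re.compile(
    r'\[(?P<day>\d{2}/\w{3}/\d{4}):[^\]]*\].*"(?P<agent>[^"]*)"\s*$'
)


def googlebot_hits_per_day(lines) -> Counter:
    """Count log lines per day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("day")] += 1
    return hits
```

To run it against a real log, open the file with `with open("access.log") as f:` and pass `f` to the function, where `access.log` stands in for your server's actual log path.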

8. Use a Ping Service

Pinging your pages after you add new content is a wonderful way to get the bot to find that new content on your site. Services such as Ping-O-Matic let you manually ping your content when you post it.
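Under the hood, blog ping services such as Ping-O-Matic accept an XML-RPC call named `weblogUpdates.ping` that carries your site's name and URL. This Python sketch builds and sends that call with the standard `xmlrpc.client` module; the endpoint URL is an assumption to verify against the service's own documentation, and both helper names are hypothetical.

```python
import xmlrpc.client

# Assumption: Ping-O-Matic's XML-RPC endpoint; confirm it before relying on it.
PING_ENDPOINT = "http://rpc.pingomatic.com/"


def build_ping_request(site_name: str, site_url: str) -> str:
    """Return the XML-RPC request body for weblogUpdates.ping, for inspection."""
    return xmlrpc.client.dumps((site_name, site_url), methodname="weblogUpdates.ping")


def send_ping(site_name: str, site_url: str, endpoint: str = PING_ENDPOINT):
    """Send weblogUpdates.ping to the endpoint and return the service's reply."""
    proxy = xmlrpc.client.ServerProxy(endpoint)
    return proxy.weblogUpdates.ping(site_name, site_url)
```

`build_ping_request` lets you inspect the payload without any network access, while `send_ping("My Blog", "https://www.example.com/")` actually contacts the service.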

9. Submit to the Search Engines and Other Directories

Yeah, experts are correct when they say that submitting to private directories is not a very effective SEO tactic. However, there are reliable directories, such as those offered by the search engines and websites like DMOZ (which has since closed). These authority directories are worth submitting your website to, because the bots visit them and then follow the links to the sites they list.

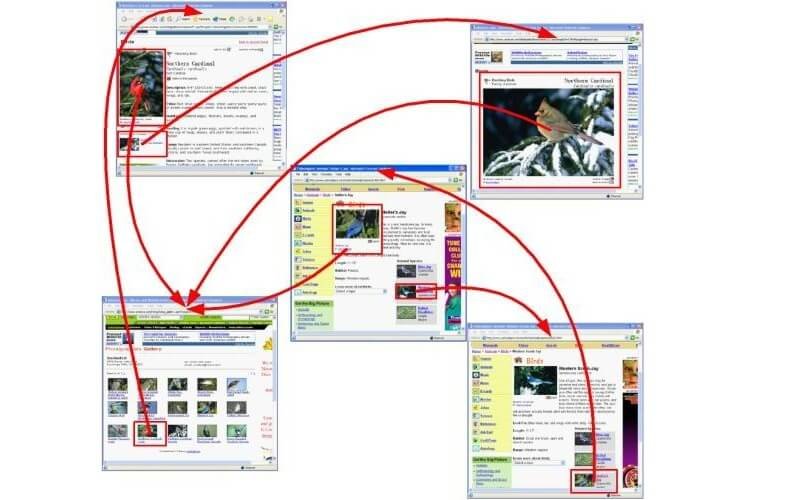

10. Interlink Your Blog Pages

Link your blog pages together. The benefits are twofold. First, it creates internal links that Google registers, which can help your ranking; second, it lets the search engine bots quickly follow links to other pages within your site.
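Search engine bots discover pages by following links, so a page that nothing links to (an "orphan" page) is easy for them to miss. The Python sketch below walks a site's internal links breadth-first from the home page, roughly the way a crawler would, and reports the pages it never reaches. It works on a hypothetical in-memory dictionary of page paths and HTML rather than fetching a live site, and `find_orphans`, `internal_links`, and the `www.example.com` host are illustrative.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag fed to it."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def internal_links(path: str, html: str, host: str) -> set[str]:
    """Return the paths on `host` that the page at `path` links to."""
    collector = LinkCollector()
    collector.feed(html)
    base = f"https://{host}{path}"  # used only to resolve relative links
    links = set()
    for href in collector.hrefs:
        target = urlparse(urljoin(base, href))
        if target.netloc == host:  # ignore links to other sites
            links.add(target.path or "/")
    return links


def find_orphans(pages: dict[str, str], host: str = "www.example.com", start: str = "/") -> set[str]:
    """Breadth-first walk from `start`; return pages that no chain of links reaches."""
    seen = {start}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for link in internal_links(current, pages.get(current, ""), host):
            if link in pages and link not in seen:
                seen.add(link)
                queue.append(link)
    return set(pages) - seen


if __name__ == "__main__":
    # Hypothetical site: post-2 exists, but nothing links to it.
    site = {
        "/": '<a href="/blog/">Blog</a>',
        "/blog/": '<a href="post-1">Post 1</a> <a href="https://other.example.org/">Elsewhere</a>',
        "/blog/post-1": '<a href="/blog/">Back to blog</a>',
        "/blog/post-2": "<p>No links point here.</p>",
    }
    print(find_orphans(site))
```

With the sample pages, `/blog/post-2` is reported as an orphan, since no other page links to it; adding a link to it from another blog post fixes that.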

Conclusion

To get your content listed in the search engines, you need the search engine bots to crawl your website efficiently. By following each of these tips, you can get the bots to crawl your website and find your content quickly. This will help your site rank organically in the search engines for the terms you choose.
